Flat data files such as .csv, .txt, .tsv are popular ways to store and transfer data assets. A spreadsheet program such as Excel or Google Sheet however can have a difficult time accessing very large files. This blog is going to walk you through some tools you can use to open a big CSV file.

What’s considered a big .CSV file?

- First, let's define what is considered "big" for a CSV file,

- Big data files are usually too big to process on a local computer or desktop software tools. The famous 1,048,576 rows limit instituted by Excel is a great example.

- “Regular” data that can be processed by a laptop program within an acceptable time and cost is not considered as “big data”. Big data requires massively parallel computing software running on tens, hundreds, or even thousands of servers.

- Big data typically is heterogeneous data that comes from a variety of sources

What differs big data from regular data is also the way it is collected. It can be aggregated and synthesized from millions of objects in many formats such as video, image, soundtrack, or a production system such as a CRM software. Compared to a traditional database that is set up to record a certain type of event, big data is typically messier. Storing big data in a CSV file is very common. Typically these files can occupy Gigabytes of space in your computer.

Why are some CSV files hard to access?

CSV files can be messy. A typical database program is not designed to handle all the quirks and errors that might come with a corrupted CSV file. In fact, there are hundreds of edge cases with a CSV file that may cause it to be inaccessible. Here are some examples:

- Use of invalid characters like hyphen (-), parentheses (), period (.), colon (;), Asterisks (*), and other characters.

- Line breaks in the column fields

- Separator characters in the text of the CSV files

- Long numbers that have leading zeros

- CSV files not using UTS

- Non-printable ASCII control characters like CR or LF at the end of the line

- Blank lines at the end of the corrupted CSV files

- Duplicate column (data field) names

- Text field with unescaped delimiter

- Quoted string with an unescaped double quote

- CRLF/Dos line endings

- Non-standard escape characters

- Unrecognized Unicode/Illegal Codepoint

How can you access a big CSV file?

Python

Python is a general purpose language, but it comes with many data manipulation packages such as Pandas, Numpy, Scipy etc. To access a big CSV file, you can construct a Pandas data frame to read certain rows.

import pandas as pd

file = '/document/2021.csv'

df = pd.read_csv(file)

print df.head(100)

R, SaS or Matlab

R, SaS or Matlab are programming languages designed for statistics and analytics. To access a large CSV in SaS, you can run the codes below.

filename in "I:\2021.csv";

data _null_;

infile in lrecl=1;

input;

run;

Powershell

If you are a Windows user, here's how you can get a sample for your CSV file. Though it's not good for more in-depth analysis.

$infile = Get-Item .\2021.csv

$reader = New-Object -TypeName System.IO.StreamReader -ArgumentList

$infile

while ($line = $reader.ReadLine())

{

# break after 100

}

$reader.close()

Terminal

If you are a Mac user, Terminal can do a very similar job.

head -100 /User/vincent/2021.csv > /User/vincent/sample.csv

The problem with using either one of the programming languages above is that they all consume a lot of local computational resources. They also take a lot of time to optimize. Another way to do this is through a cloud data warehouse.

The problem with using either one of the programming languages above is that they all consume a lot of local computational resources. They also take a lot of time to optimize. Another way to do this is through a cloud data warehouse.

Cloud database management

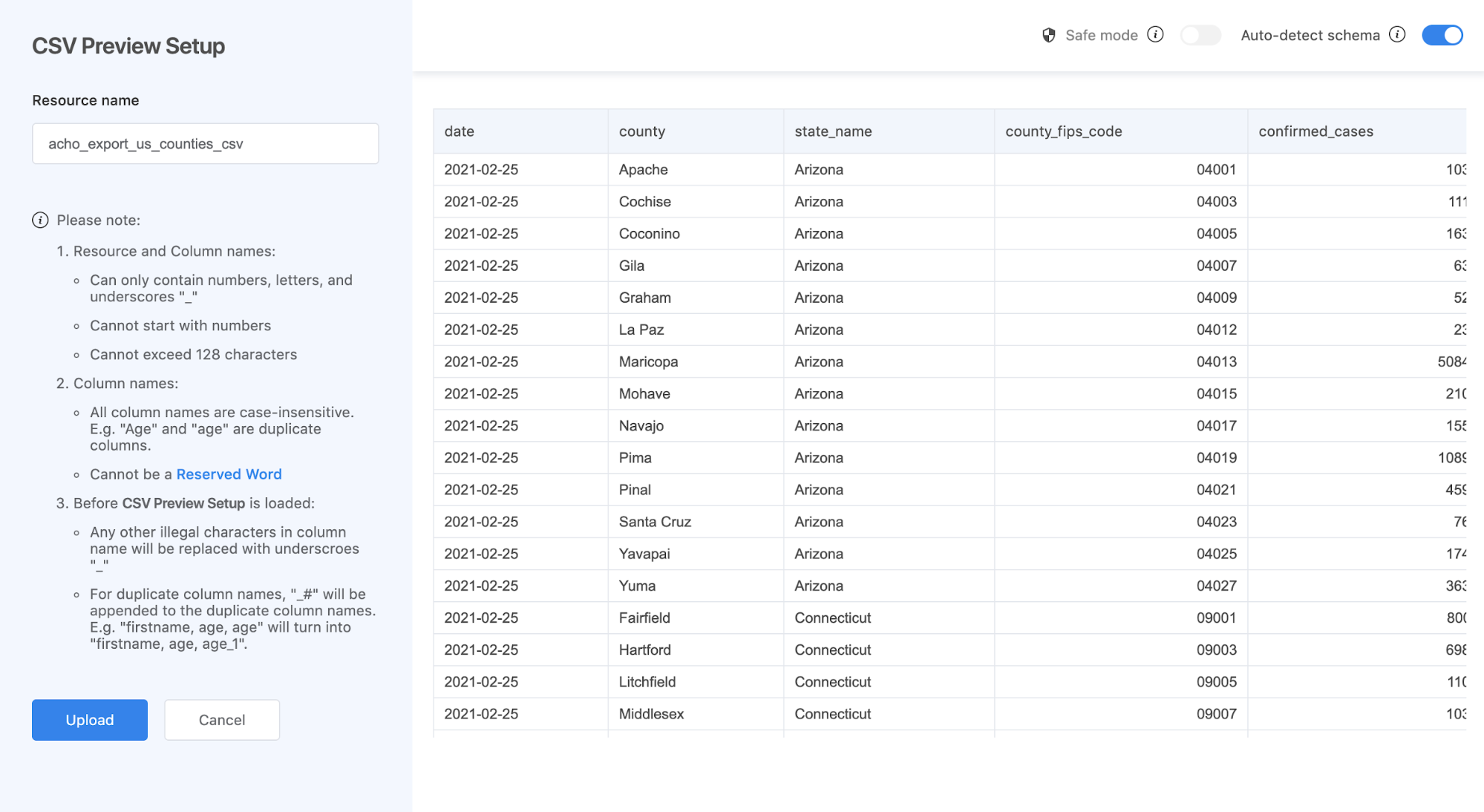

To access these files, cloud computing does a pretty good job. You can first upload the CSV file to Acho Studio. Then you’ll see the preview parser. Make sure that you have all the column types correct and no empty column names. You can also select the auto-parser. Then you can create a project to access this CSV file.I tried processing something that’s over 5 million rows and 600MB in size here. It'd take a minute or two to upload depending on your WIFI network. Processing it wouldn’t take too long either.

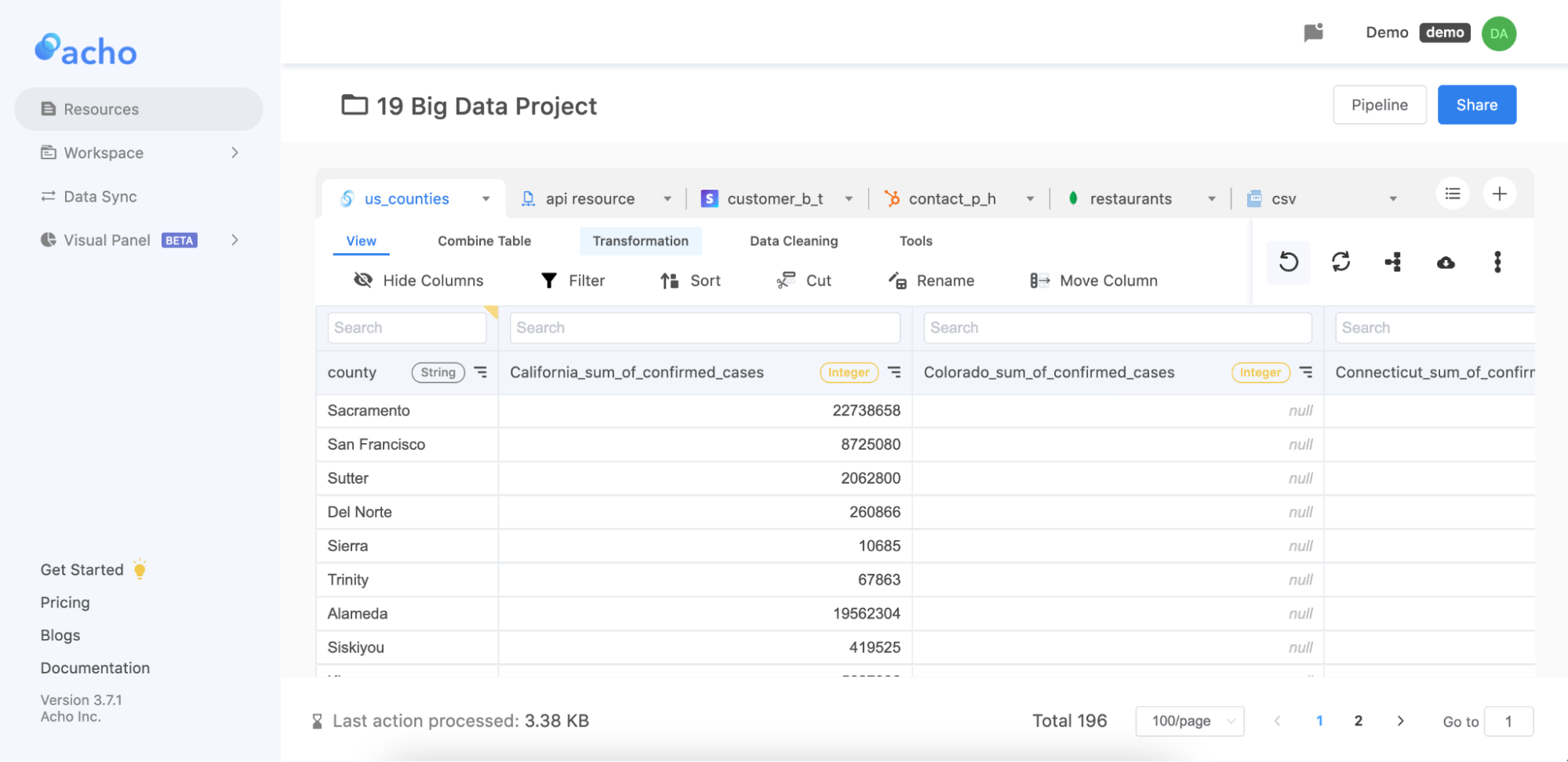

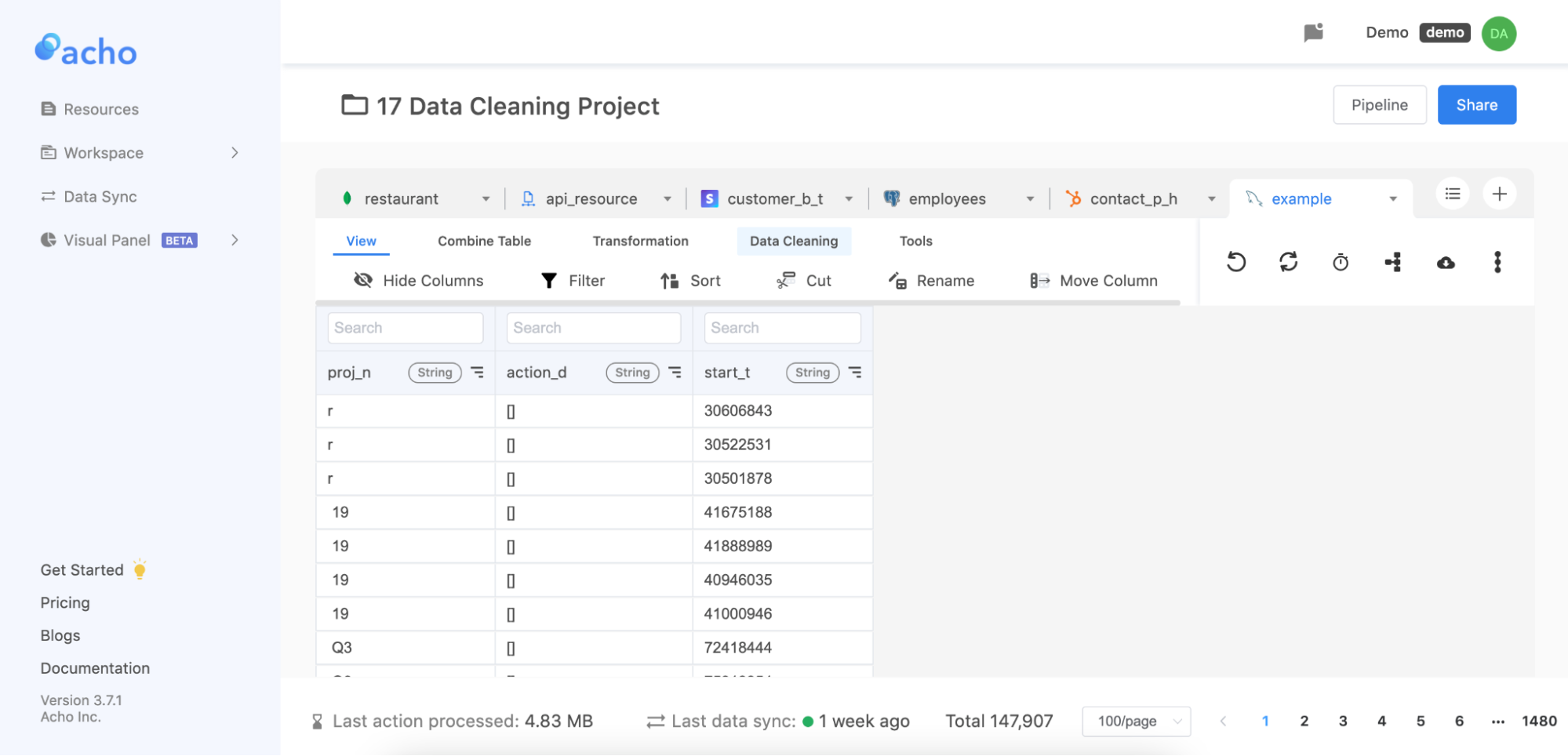

Then you can load it up in a project. You can do many different database manipulations to your dataset such as filter, sort, join, etc. Then you can use the little cloud button on the right to download the CSV. file after you process it.

For example, you can create a pivot table like this like you would on a spreadsheet. There's no limit on how large your file can be.

Where should you store large CSV files?

Our computer’s hard drive can range from 200GB to several TBs. A big CSV file can take up valuable space from your computer. The cloud on the other hand is a lot cheaper, thanks to the data centers and their economy of scale. There are several advantages in cloud storage for your CSV files:

- Cheaper costs: the cheapest hard drive for personal computers in 2021 still cost around $15/TB. Acho offers it for free below 1TB at the moment.

- Faster speed: loading your files on Cloud can be multitudes faster than storing locally

- Better management: you can store all your files in one place

As data is piling up, finding a place to access, process, and store data is becoming more and important for teams across different industries. When it comes to collaboration and sharing, this issue turns out to be more critical. Imagine a team that cannot share the same data project, and needs to constantly deal with compatibility issues. A massive amount of time and energy will be wasted

Can I store CSV files as a database?

A database is an organized collection of records. Unlike a CSV file, a database can support applications and systems that retrieve data in

- Databases can handle and process complex queries for analysis

- Databases can be interfaced by multiple parties with permission controls

- Databases can index a large number of records so it is easier to find a certain record

- Databases can handle multiprocess/multithreaded access.

- Databases can handle access from a network

- Databases can observe, monitor, and test for data quality and integrity

- Databases can update data on schedule

- Databases are reliable

- Databases can handle transactions and concurrent access

- Databases can be manipulated and transformed logically

If you have a lot of CSV files, consider building a database for them so you can take advantage of all the benefits above.

Conclusion

There are many ways for you to work with a large .csv, .txt, .tsv file. Many require technical knowledge in a programming language. Even you are great with Python or R, processing a big file can be computationally expensive. Using Acho Studio allows you to work with many large CSV files at a much faster rate. Though it may not offer all analytical features that SAS, or Python offer yet, you can perform in-depth analysis via the SQL-based actions or using SQL directly on Acho.

For more on this, you can check out a video tutorial here.