What is web scraping?

Web scraping is the process of automatically extracting data from websites using software or tools. This data can be in various formats, such as text, images, videos, or any other structured or unstructured data. Web scraping is often used to extract large amounts of data from multiple websites quickly and efficiently.

How to automate web scraping?

Automating web scraping involves setting up a process that automatically extracts data from websites at regular intervals or as needed. Here are the general steps to automate web scraping:

- Identify the target website

- Write a scraper

- Host your scraper with a dedicated server

- Set up a scheduler for your scraper

- Store the scraped data

- Build an application to utilize the data

Now, let's take a closer look at each step.

1. Identify the target website

First, you have to determine which website or websites you want to scrape data from. You should also check if there are any legal restrictions or terms of service that prohibit web scraping.

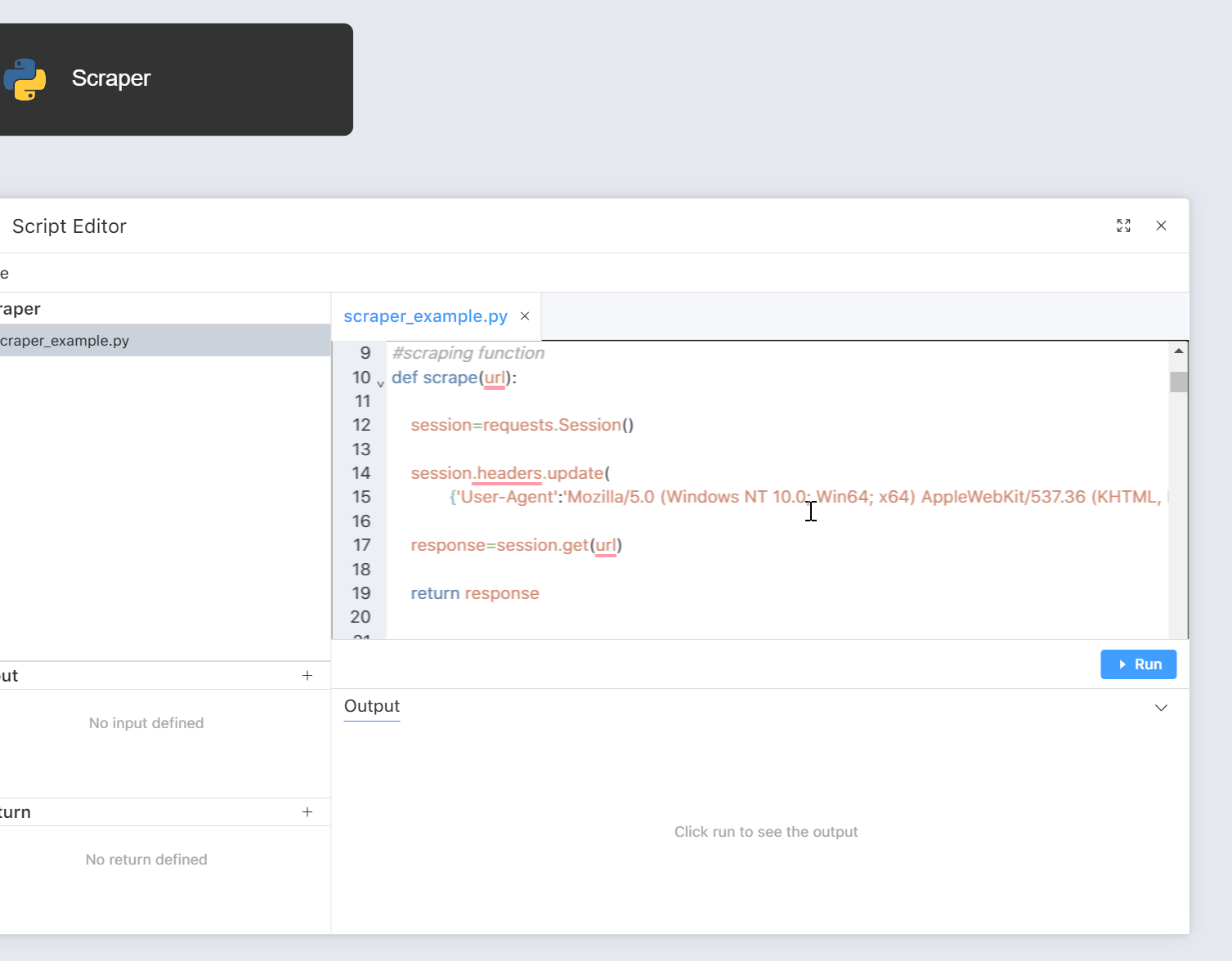
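One quick technical check to run alongside reading the terms of service is the site's robots.txt file. The sketch below uses Python's standard `urllib.robotparser` module to test whether a URL is allowed for a given user agent. The helper name `is_allowed`, the sample rules, and the `example.com` URLs are assumptions made for illustration. For a live site you would point the parser at the real file with `set_url()` and `read()` instead of passing text in.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content, used only for this example.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_allowed(SAMPLE_ROBOTS, "https://example.com/products"))      # True
print(is_allowed(SAMPLE_ROBOTS, "https://example.com/private/data"))  # False
```

A passing robots.txt check does not replace reviewing the site's terms of service; it only tells you what the site asks automated clients to avoid.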

2. Write a scraper

The next step is to use a programming language, such as JavaScript or Python, to write code that extracts the data from the website. The code usually sends requests to the website, parses the HTML content, and extracts the relevant data using CSS selectors or XPath expressions.
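As a rough illustration of the request, parse, and extract flow, here is a minimal sketch that uses only Python's standard library. The function names, the `example-scraper/0.1` user agent, and the sample HTML are assumptions for this example. The standard-library parser has no CSS selector or XPath support, so the sketch matches `<h2>` elements with the class `title` by hand (the equivalent of the selector `h2.title`); libraries such as Beautiful Soup or lxml are common choices when you want real selector support.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Download a page and return its body decoded as text."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class TitleExtractor(HTMLParser):
    """Collects the text inside every <h2> element that has the class 'title'."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h2" and "title" in classes:
            self._in_title = True
            self.titles.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.titles[-1] += data


def extract_titles(html: str) -> list:
    """Parse HTML text and return the cleaned-up title strings."""
    parser = TitleExtractor()
    parser.feed(html)
    parser.close()
    return [title.strip() for title in parser.titles]


# Hypothetical page content, so the example runs without a network call.
SAMPLE_HTML = """
<html><body>
  <h2 class="title">First post</h2>
  <p>Intro text</p>
  <h2 class="title featured">Second post</h2>
  <h2>Not a title</h2>
</body></html>
"""

print(extract_titles(SAMPLE_HTML))  # ['First post', 'Second post']
```

Against a real site, you would call `extract_titles(fetch_html(url))` and adjust the tag and class checks to match that site's markup.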

3. Host your scraper with a dedicated server

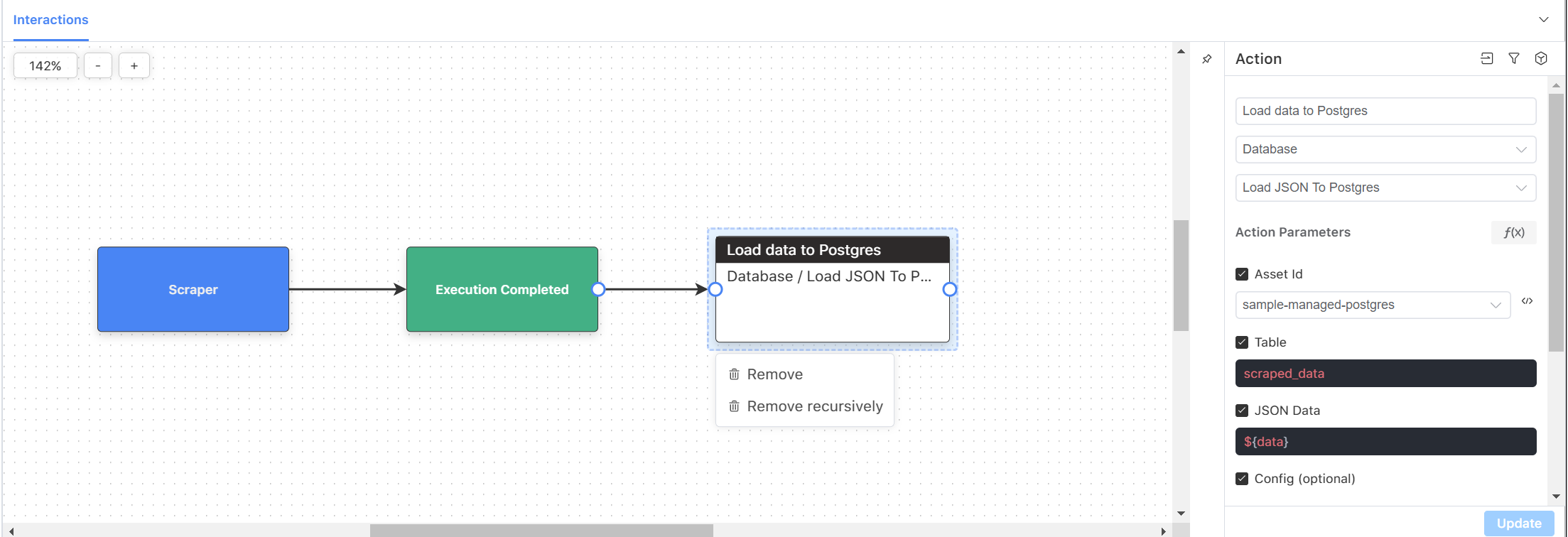

Once you have finished your scraper script, you can use a tool such as Acho to host it on a server. This step keeps your scraper available to run and collect data even when your computer is turned off. Moreover, running a scraper can require significant computing power, so hosting it on a dedicated server is often faster and more efficient. On Acho, you can drag a script node, such as a Python node, from the Create panel on the right and upload your script within that node. Alternatively, you can use the Acho SDK to deploy your scraper to Acho.

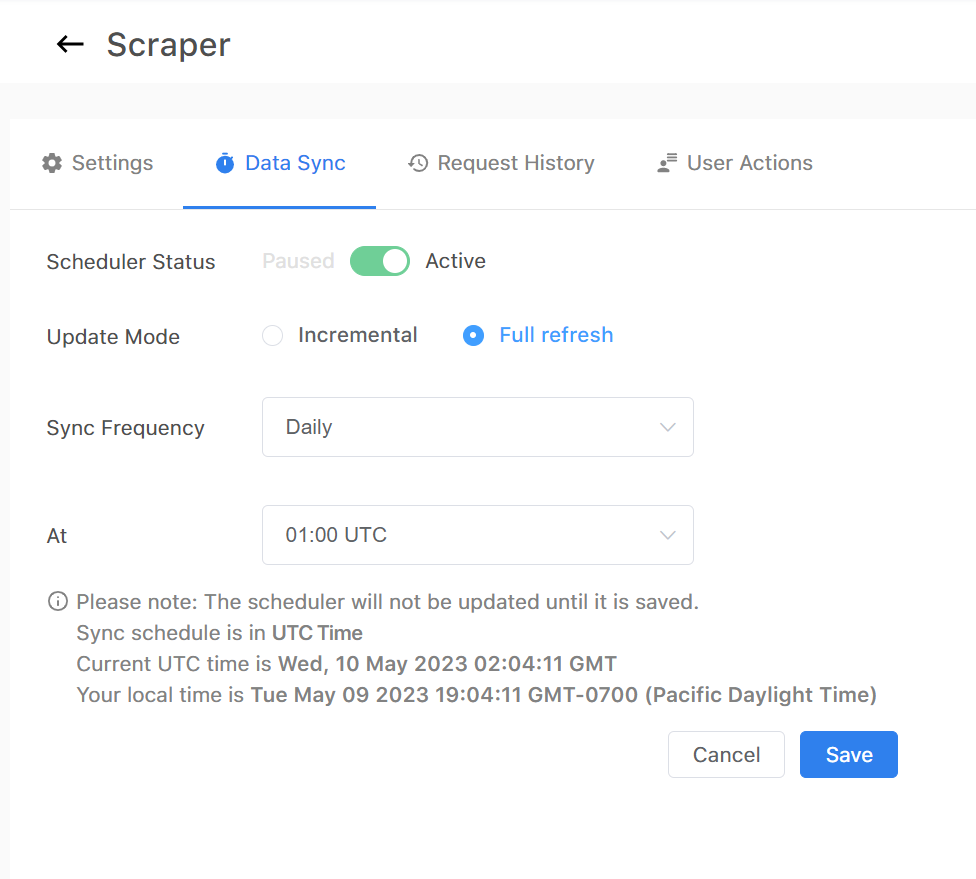

4. Set up a scheduler for your scraper

Use a task scheduler, such as cron, to run the scraper at regular intervals or as needed. On Acho, you can use the built-in scheduling feature instead.
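If you are not using cron or a hosted scheduler, the same idea can be sketched in a few lines of Python. The `run_every` helper below is a hypothetical name, not part of any library: it calls a job, waits out the rest of the interval, and repeats. The `max_runs` and `sleep` parameters are assumptions added so the loop can be stopped and tested; a production setup would more likely rely on cron or a platform scheduler, which survive restarts.

```python
import time


def run_every(job, interval_seconds, max_runs=None, sleep=time.sleep):
    """Call job() repeatedly, aiming for one run per interval.

    Returns the number of runs completed. A failing run is logged and
    counted, so one bad scrape does not stop the schedule.
    """
    runs = 0
    while max_runs is None or runs < max_runs:
        started = time.monotonic()
        try:
            job()
        except Exception as exc:
            print(f"scrape failed: {exc!r}")
        runs += 1
        if max_runs is not None and runs >= max_runs:
            break
        # Subtract the time the job took so runs do not drift later and later.
        elapsed = time.monotonic() - started
        sleep(max(0.0, interval_seconds - elapsed))
    return runs


# Short demo: two runs, a hundredth of a second apart.
run_every(lambda: print("scraping..."), interval_seconds=0.01, max_runs=2)
```

With cron, the equivalent is a single crontab entry; for example, a schedule of `0 * * * *` runs a command at the start of every hour.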

5. Store the scraped data

Once the data has been extracted, you can store it in a file or database for later use, or process it further, depending on your requirements.
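For the database option, a minimal sketch with Python's built-in `sqlite3` module might look like the following. The table name `scraped_items`, its columns, and the shape of each item (a dict with `url` and `title` keys) are assumptions for this example. Using the URL as the primary key means that scraping the same page again updates the existing row instead of creating a duplicate.

```python
import sqlite3
from datetime import datetime, timezone


def save_items(conn, items):
    """Insert or update scraped items and return the total row count."""
    now = datetime.now(timezone.utc).isoformat()
    with conn:  # commits on success, rolls back if an error is raised
        conn.execute(
            """
            CREATE TABLE IF NOT EXISTS scraped_items (
                url        TEXT PRIMARY KEY,
                title      TEXT NOT NULL,
                scraped_at TEXT NOT NULL
            )
            """
        )
        conn.executemany(
            "INSERT OR REPLACE INTO scraped_items (url, title, scraped_at) "
            "VALUES (?, ?, ?)",
            [(item["url"], item["title"], now) for item in items],
        )
    return conn.execute("SELECT COUNT(*) FROM scraped_items").fetchone()[0]


# Demo with an in-memory database; pass a file path to keep data between runs.
conn = sqlite3.connect(":memory:")
save_items(conn, [
    {"url": "https://example.com/a", "title": "First post"},
    {"url": "https://example.com/b", "title": "Second post"},
])
for row in conn.execute("SELECT url, title FROM scraped_items ORDER BY url"):
    print(row)
```

A flat file such as CSV is enough for small one-off jobs; a database becomes more useful once the scraper runs on a schedule and you need to deduplicate or query the results.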

6. Build an application to utilize the data

Once you have automated your scraper to collect data, you can build an interface on top of the scraped data. Here are some examples of applications or analyses that you can build:

- Sentiment analysis: Web scraping can be used to extract customer reviews, feedback, and social media posts related to a particular product or service. This data can then be analyzed using sentiment analysis techniques to gauge customer sentiment and improve customer experience.

- Predictive modeling: Web scraping can be used to extract historical data on sales, customer behavior, or other relevant data points. This data can then be used to create predictive models that can help businesses forecast future trends, identify potential risks, and make informed decisions.

- Competitive analysis: Web scraping can be used to extract data on competitors, such as pricing, product information, and marketing strategies. This data can then be analyzed to identify competitive advantages and develop effective strategies.

- Marketing analytics: Web scraping can be used to extract data on website traffic, search engine rankings, and social media metrics. This data can then be analyzed to identify patterns and trends, and develop effective marketing strategies.

- Supply chain optimization: Web scraping can be used to extract data on suppliers, shipping times, and inventory levels. This data can then be analyzed to optimize supply chain operations and reduce costs.

Overall, web scraping can provide businesses with valuable data for a range of analytics purposes. If you’re interested in automating your scraper, we are happy to help you learn more about it. Contact us through the chat box in the bottom-right corner of this page if you have any questions!

- Schedule a Discovery Call

- Chat with Acho: Chat now

- Email us directly: contact@acho.io
